Archive

The Optical Illusion That’s So Good, It Even Fools DanKam

We’ll return to the DNSSEC Diaries soon, but I wanted to talk about a particularly interesting optical illusion first, due to its surprising interactions with DanKam.

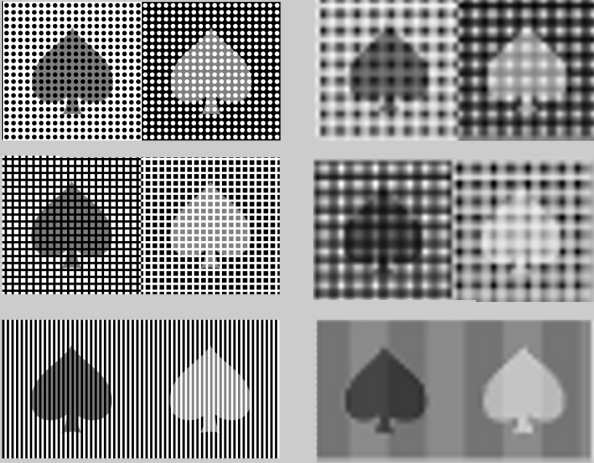

Recently on Reddit, I saw the following rather epic optical illusion (originally from Akiyoshi Kitaoka):

What’s the big deal, you say? The blue and green spirals are actually the same color. Don’t believe me? Sure, I could pull out the eyedropper and say that the greenish/blue color is always 0 Red, 255 Green, 150 Blue. But nobody can be told about the green/blue color. They have to see it, for themselves:

(Image c/o pbjtime00 of Reddit.)

So what we see above is the same greenish blue region, touching the green on top and the blue on bottom — with no particular seams, implying (correctly) that there is no actual difference in color and what is detected is merely an illusion.

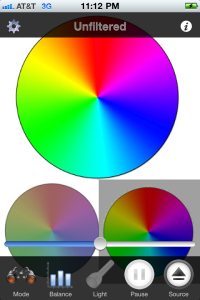

And the story would end there, if not for DanKam. DanKam’s an augmented reality filter for the color blind, designed to make clear what colors are what. Presumably, DanKam should see right through this illusion. After all, whatever neurological bugs cause the confusion effect in the brain certainly were not implemented in my code.

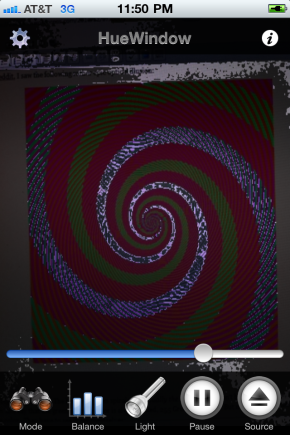

Hmm. If anything, the illusion has grown stronger. We might even be tricking the color blind now! Now we have an interesting question: Is the filter magnifying the effect, making identical colors seem even more different? Or is it, in fact, succumbing to the effect, confusing the blues and greens just like the human visual system is?

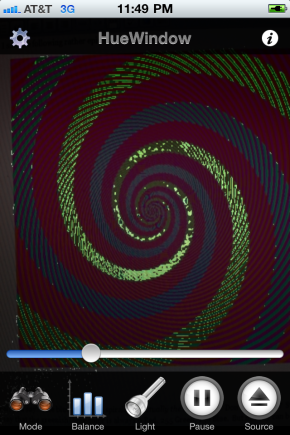

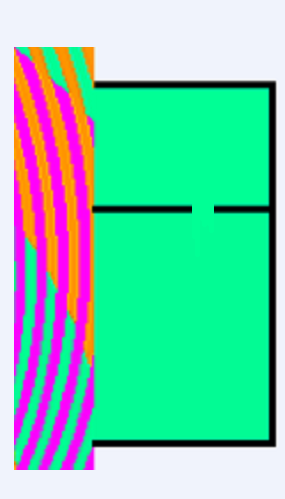

DanKam has an alternate mode that can differentiate the two. HueWindow mode lets the user select only a small “slice” of the color spectrum — just the blues, for example, or just the greens. It’s basically the “if all else fails” mode of DanKam. Let’s see what HueWindow shows us:

The orange stripes go through the “green” spiral but not the “blue” one. So without us even knowing it, our brains compare that spiral to the orange stripes, forcing it to think the spiral is green. The magenta stripes make the other part of the spiral look blue, even though they are exactly the same color. If you still don’t believe me, concentrate on the edges of the colored spirals. Where the green hits the magenta it looks bluer to me, and where the blue hits the orange it looks greener. Amazing.

The overall pattern is a spiral shape because our brain likes to fill in missing bits to a pattern. Even though the stripes are not the same color all the way around the spiral, the overlapping spirals make our brain think they are. The very fact that you have to examine the picture closely to figure out any of this at all shows just how easily we can be fooled.

See, that looks all nice and such, but DanKam’s getting the exact same effect and believe me, I did not reimplement the brain! Now, it’s certainly possible that DanKam and the human brain are finding multiple paths to the same failure. Such things happen. But bug compatibility is a special and precious thing where I come from, as it usually implies similarity in the underlying design. I’m not sure what’s going on in the brain. But I know exactly what’s going on with DanKam:

There is not a one-to-one mapping between pixels on the screen and pixels in the camera. So multiple pixels on screen are contributing to each pixel DanKam is filtering. The multiple pixels are being averaged together, and thus orange (605nm) + turquoise (495nm) is averaged to green (550nm) while magenta (~420nm) + turquoise (495nm) is averaged to blue (457nm).
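To make the arithmetic concrete, here is a tiny sketch of that averaging. It treats each color as a single nominal wavelength, which is a simplification on my part (a real camera averages RGB sensor values, and magenta is not a single spectral wavelength at all), so take the names and the model as illustrative only.

```python
# Nominal wavelengths in nanometres, taken from the paragraph above.
ORANGE_NM = 605
TURQUOISE_NM = 495
MAGENTA_NM = 420  # rough stand-in: magenta is not really one wavelength


def average_nm(a, b):
    """Average two nominal wavelengths, as a crude model of pixel blending."""
    return (a + b) / 2


green_ish = average_nm(ORANGE_NM, TURQUOISE_NM)   # lands in the greens
blue_ish = average_nm(MAGENTA_NM, TURQUOISE_NM)   # lands in the blues
print(green_ish, blue_ish)
```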

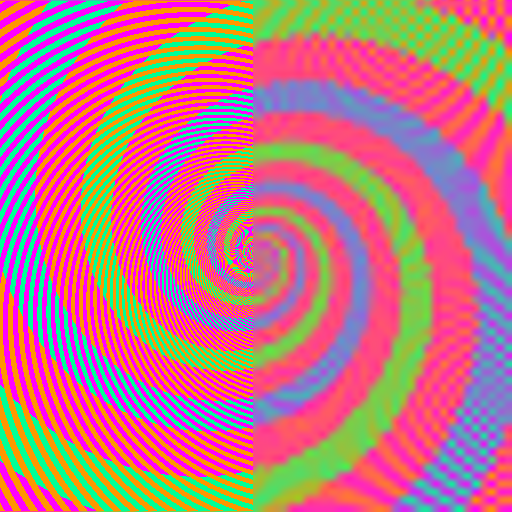

There is no question that this is what is happening with DanKam. Is it just as simple with the brain? Well, let’s do this: Take the 512×512 spiral above, resize it down to 64×64 pixels, and then zoom it back up to 512×512. Do we see the colors we’d expect?
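If you want to try this without an image editor, here is a rough sketch of the same shrink-then-zoom experiment in plain Python. The turquoise value (0, 255, 150) is the one quoted earlier in the post; the orange and magenta RGB values, the striped test pattern, and the function names are my own assumptions for illustration, and the box filter is just one plausible way to resize.

```python
def downsample(image, factor):
    """Box-filter an image (list of rows of (r, g, b) tuples) by `factor`."""
    height, width = len(image), len(image[0])
    out = []
    for y in range(0, height - height % factor, factor):
        row = []
        for x in range(0, width - width % factor, factor):
            block = [image[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            n = len(block)
            # Average each channel over the block.
            row.append(tuple(sum(p[c] for p in block) // n for c in range(3)))
        out.append(row)
    return out


def upsample(image, factor):
    """Nearest-neighbour zoom: repeat every pixel `factor` times each way."""
    out = []
    for row in image:
        wide = [p for p in row for _ in range(factor)]
        out.extend([list(wide) for _ in range(factor)])
    return out


def make_stripes(stripe, size=16):
    """Alternate single-pixel columns of turquoise and `stripe`."""
    turquoise = (0, 255, 150)
    return [[turquoise if x % 2 == 0 else stripe for x in range(size)]
            for _ in range(size)]


ORANGE = (255, 150, 0)    # assumed value for the orange stripes
MAGENTA = (255, 0, 255)   # assumed value for the magenta stripes

# Same factor of 8 as going from 512x512 down to 64x64 and back.
with_orange = upsample(downsample(make_stripes(ORANGE), 8), 8)
with_magenta = upsample(downsample(make_stripes(MAGENTA), 8), 8)
print(with_orange[0][0], with_magenta[0][0])
```

The same turquoise comes out green-dominant next to orange and blue-dominant next to magenta, which is the effect in miniature.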

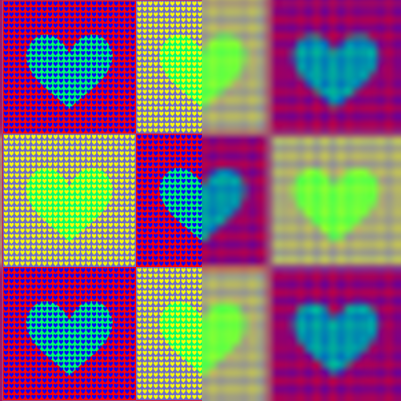

Indeed! Perhaps the brightness is a little less than expected, but without question, the difference between the green and blue spirals is plain as day! More importantly, perceived hues are being recovered fairly accurately! And the illusion-breaking works on Akiyoshi Kitaoka’s other attacks:

[YES. SERIOUSLY. THE HEARTS ARE ACTUALLY THE SAME COLOR — ON THE LEFT SIDE. THE RIGHT SIDE IS WHAT YOUR VISUAL SYSTEM IS REPORTING BACK.]

So, perhaps a number of illusions are working not because of complex analysis, but because of simple downsampling.

Could it be that simple?

No, of course not. The first rule of the brain is that it’s always at least a little more complicated than you think, and probably more 🙂 You might notice that while we’re recovering the color, or chroma, relatively accurately, we’re losing detail in perceived luminance. Basically, we’re losing edges.

It’s almost like the visual system sees dark vs. light at a different resolution than one color vs. another.

This, of course, is no new discovery. Since the early days of color television, color information has been sent with lower detail than the black and white it augmented. And all effective compressed image formats tend to operate in something called YUV, which splits the normal Red, Green, and Blue into Black vs. White, Red vs. Green, and Orange vs. Blue (which happen to be the signals sent over the optic nerve). Once this is done, the Black and White channels are transmitted at full size while the differential color channels are halved or even quartered in detail. Why do this? Because the human visual system doesn’t really notice. (The modes are called 4:2:2 or 4:1:1, if you’re curious.)
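Here is a minimal sketch of what 4:2:2-style subsampling does to one row of pixels: luma stays per-pixel, while each pair of neighbours shares one averaged chroma value. I am assuming the classic analog-TV YUV coefficients, an RGB tuple representation, and my own function names; real codecs differ in the details (offsets, clamping, filter choice), so treat this as an illustration only.

```python
def rgb_to_yuv(r, g, b):
    """Split RGB into luma (Y) and two colour-difference signals (U, V)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    return y, 0.492 * (b - y), 0.877 * (r - y)


def yuv_to_rgb(y, u, v):
    """Invert rgb_to_yuv (no clamping, so values may leave 0..255)."""
    r = y + v / 0.877
    b = y + u / 0.492
    g = (y - 0.299 * r - 0.114 * b) / 0.587
    return r, g, b


def subsample_row_422(row):
    """Keep luma for every pixel; share one averaged chroma pair per two pixels."""
    yuv = [rgb_to_yuv(*p) for p in row]
    out = []
    for i in range(0, len(yuv) - 1, 2):
        (y0, u0, v0), (y1, u1, v1) = yuv[i], yuv[i + 1]
        u, v = (u0 + u1) / 2, (v0 + v1) / 2
        out.append(yuv_to_rgb(y0, u, v))
        out.append(yuv_to_rgb(y1, u, v))
    if len(row) % 2:
        # An odd trailing pixel has no partner, so it passes through unchanged.
        out.append(tuple(float(c) for c in row[-1]))
    return out


row = [(255, 150, 0), (0, 255, 150)]  # orange next to turquoise
print(subsample_row_422(row))
```

Both output pixels keep their original brightness but now carry the same blended color signal, which is exactly the kind of detail loss the eye tends not to notice.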

So, my theory is that these color artifacts aren’t the result of some complex analysis with pattern matching, but rather the normal downsampling of chroma that occurs in the visual system. Usually, such downsampling doesn’t cause such dramatic shifts in color, but of course the purpose of an optical illusion is to exploit the corner cases of what we do or do not see.

FINAL NOTE:

One of my color blind test subjects had this to say:

“Put a red Coke can, and a green Sprite can, right in front of me, and I can tell they’re different colors. But send me across the room, and I have no idea.”

Size matters to many color blind viewers. One thing you’ll see test subjects do is pick up objects, put them really close to their face, scan them back and forth…there’s a real desire to cover as much of the visual field as possible with whatever needs to be seen. One gets the sense that, at least for some, they see Red vs. Green at very low resolution indeed.

FINAL FINAL NOTE:

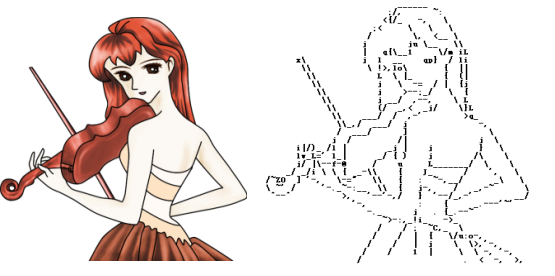

Just because chroma is downsampled, with hilarious side effects, doesn’t mean running an attack in luminance won’t work. Consider the following images, where the grey spade seems to not always be the same grey spade. On the left are Kitaoka’s original illusions. On the right is what happens when you blur things up. Suddenly, the computer sees (roughly, with some artifacts) the same shades we do. Interesting…

A SLIGHT BIT MORE SPECULATION:

I’ve thought that DanKam works because it quantizes hues to a “one true hue”. But it is just as possible that DanKam is working because it’s creating large regions with exactly the same hue, meaning there’s less distortion during the zoom down…

Somebody should look more into this link between visual cortex size and optical illusions. Perhaps visual cortex size directly controls the resolution onto which colors and other elements are mapped? I remember a thing called topographic mapping in the visual system, in which images seen were actually projected onto a substrate of nerves in an accurate, x/y mapping. Perhaps the larger the cortex, the larger the mapping, and thus the stranger ?

I have to say, it’d be amusing if DanKam actually ended up really informing us re: how the visual system works. I’m just some hacker playing with pixels here… 😉

DanKam: Augmented Reality For Color Blindness

[And, we’re in Forbes!]

[DanKam vs. A Really Epic Optical Illusion]

[CNet]

So, for the past year or so, I’ve had a secret side project.

Technically, that shouldn’t be surprising. This is security. Everybody’s got a secret side project.

Except my side project has had nothing to do with DNS, or the web, or TCP/IP. In fact, this project has nothing to do with security at all.

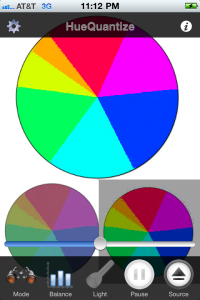

Instead, I’ve been working on correcting color blindness. Check it out:

These are the Ishihara test plates. You might be familiar with them.

If you can read the numbers on the left, you’re not color blind.

You can almost certainly read the numbers on the right. That’s because DanKam has changed the colors into something that’s easier for normal viewers to read, but actually possible for the color blind to read as well. The goggles, they do something!

Welcome to DanKam, a $3 app being released today on iPhone and Android (ISSUES WITH CHECKOUT RESOLVED! THIS CODE IS LIVE!). DanKam is an augmented reality application, designed to apply one of several unique and configurable filters to images and video such that colors — and differences between colors — are more visible to the color blind.

[EDIT: Some reviews. Wow. Wow.

@waxpancake: Dan Kaminsky made an augmented-reality iPhone app for the colorblind. And it *works*.

Oh, and I used it today in the real world. It was amazing! I was at Target with my girlfriend and saw a blue plaid shirt that I liked. She asked me what color it was so I pulled up DanKam and said “purple.” I actually could see the real color, through my iPhone! Thanks so much, I know I’ve said it 100x already, but I can’t say it another 100x and feel like I’ve said it enough. Really amazing job you did.

–Blake Norwood

I went to a website that had about 6 of those charts and screamed the number off every one of those discs! I was at work so it was a little awkward.

–JJ Balla, Reddit

Thank you so much for this app. It’s like an early Christmas present! I, too, am a color blind Designer/Webmaster type with long-ago-dashed pilot dreams. I saw the story on Boing Boing, and immediately downloaded the app. My rods and cones are high-fiving each other. I ran into the other room to show a fellow designer, who just happened to be wearing the same “I heart Color” t-shirt that you wore for the Forbes photo. How coincidental was that? Anyway, THANKS for the vision! Major kudos to you…

–Patrick Gerrity

Just found this on BoingBoing, fired up iTunes, installed and tried it. Perfectly done!

That will save me a lot of trouble with those goddamn multicolor LEDs! Blinking green means this, flashing red means that… Being color blind, it is all the same. But now (having DanKam in my pocket), I have a secret weapon and might become the master of my gadgetry again…Can’t thank you enough…!

–klapskalli

Holy shit. It works.

It WORKS. Not perfectly for my eyes, but still, it works pretty damned well.

I need a version of this filter I can install on my monitors and laptop, asap. And my tv. Wow.

That is so cool. Thanks for sharing this. Wish I could favorite it 1000 more times.

–zarq on Metafilter

As a colorblind person I just downloaded this app. How can I f*cking nominate you to the Nobel Prize committee? I am literally, almost in tears writing this, I CAN FRICKIN’ SEE PROPER COLORS!!!! PLEASE KEEP UP THIS WORK! AND CONTINUE TO REFINE THIS APP!

–Aaron Smith

At this point, you’re probably asking:

- Why is some hacker working on vision?

- How could this possibly work?

Well, “why” has a fairly epic answer.

So, we’re in Taiwan, building something…amusing…when we go out to see the Star Trek movie. Afterwards, one of the engineers mentions he’s color blind. I say to him, these fateful words:

“What did you think about the green girl?”

He responds, with shock: “There was a green girl??? I thought she was just tan!”

Lulz.

But I was not yet done with him. See, before I got into security, I was a total graphics nerd. (See: The Imagery archive on this website.) Graphics nerds know about things like colorspaces. Your monitor projects RGB — Red, Green, Blue. Printouts reflect CMYK — Cyan, Magenta, Yellow, blacK. And then there’s the very nice colorspace, YUV — Black vs. White, Orange vs. Blue, and Red vs. Green. YUV is actually how signals are sent back to the brain through the optic nerve. More importantly, YUV theoretically channelizes the precise area in which the color blind are deficient: Red vs. Green.

Supposedly, especially according to web sites that purport to simulate color blindness for online content, simply setting the Red vs. Green channel to a flat 50% grey would simulate nicely the effects of color blindness.
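For the curious, the naive simulation looks roughly like the sketch below. I am assuming that the V (red-difference) channel of classic YUV stands in for “Red vs. Green”, and that “flat 50% grey” means the neutral value of that channel (zero in this signed form); the function name and coefficients are my choices, not anything taken from those web sites.

```python
def flatten_red_green(r, g, b):
    """Naive colour-blindness simulation: zero out the red-difference channel."""
    y = 0.299 * r + 0.587 * g + 0.114 * b   # luma
    u = 0.492 * (b - y)                     # blue-difference, left untouched
    v = 0.0                                 # red-difference forced to neutral
    r2 = y + v / 0.877
    b2 = y + u / 0.492
    g2 = (y - 0.299 * r2 - 0.114 * b2) / 0.587
    # Clamp and round back to ordinary 8-bit channel values.
    return tuple(max(0, min(255, round(c))) for c in (r2, g2, b2))


print(flatten_red_green(255, 0, 0))   # pure red
print(flatten_red_green(0, 255, 0))   # pure green
```

Pure red and pure green both come out as yellowish-olive tones that differ mainly in brightness, which gives a feel for why the result looks muddy.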

Alas, H.L. Mencken: “For every complex problem there is an answer that is clear, simple, and wrong.” My color blind friend took one look at the above simulation and said:

“Heh, that’s not right. Everything’s muddy. And the jacket’s no longer red!”

A color blind person telling me about red? That would be like a deaf person describing the finer points of a violin vs. a cello. I was flabbergasted (a word not said nearly enough, I’ll have you know).

Ah well. As I keep telling myself — I love being wrong. It means the world is more interesting than I thought it was.

There’s actually a lot of color blind people — about 10% of the population. And they aren’t all guys, either — about 20% of the color blind are female (it totally runs in families too, as I discovered during testing). But most color blind people are neither monochromats (seeing everything in black and white) nor dichromats (seeing only the difference between orange and blue). No, the vast majority of color blind people are in fact what are known as anomalous trichromats. They still have three photoreceptors, but the ‘green’ receptor is shifted a bit towards red. The effect is subtle: Certain reds might look like they were green, and certain greens might look like they were red.

Thus the question: Was it possible to convert all reds to a one true red, and all greens to a one true green?

The answer: Yes, given an appropriate colorspace.

HSV, for Hue, Saturation, Value, is fairly obscure. It basically refers to a pattern of computing a “true color” (the hue), the relative proportion of that color to every other color (saturation), and the overall difference from darkness for the color (value). I started running experiments where I’d leave Saturation and Value alone, and merely quantize Hue.
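A minimal sketch of that kind of experiment, using Python’s standard `colorsys` module: convert to HSV, snap the hue to the nearest of a few evenly spaced “true” hues, and leave saturation and value alone. The bucket count and the nearest-bucket rounding are assumptions of mine for illustration, not a description of DanKam’s actual filter.

```python
import colorsys


def quantize_hue(rgb, buckets=6):
    """Snap a pixel's hue to the nearest of `buckets` evenly spaced hues."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    step = 1.0 / buckets
    # Round to the nearest bucket; the modulo wraps hues near 1.0 back to red.
    h = (round(h / step) % buckets) * step
    r, g, b = colorsys.hsv_to_rgb(h, s, v)   # saturation and value untouched
    return tuple(round(c * 255) for c in (r, g, b))


print(quantize_hue((255, 40, 0)))    # an orangey red
print(quantize_hue((100, 255, 0)))   # a yellowish green
```

With six buckets, the orangey red collapses onto pure red and the yellowish green onto pure green, while greys pass through unchanged because they have no saturation to begin with.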

This actually worked well — see the image at the top of the post! DanKam actually has quite a few features; here’s some tips for getting the most out of it:

- Suppose you’re in a dark environment, or even one with tinted lights. You’ll notice a major tint to what’s coming in on the camera — this is not the fault of DanKam, it’s just something your eyes are filtering! There are two fixes. The golden fix is to create your own, white balanced Light. But the other fix is to try to fix the white Balance in software. Depending on the environment, either can work.

- Use Source to see the Ishihara plates (to validate that the code works for you), or to turn on Color Wheel to see how the filters actually work

- Feel free to move the slider left and right, to “tweak” the values for your own personal eyeballs.

- There are multiple filters — try ’em! HueWindow is sort of the brute forcer, it’ll only show you one color at a time.

- Size matters in color blindness — so feel free to put the phone near something, pause it, and then bring the phone close. Also, double clicking anywhere on the screen will zoom.

- The gear in the upper left hand corner is the Advanced gear. It allows all sorts of internal variables to be tweaked and optimized. They allowed at least one female blue/green color blind person to see correctly for the first time in her life, so these are special.
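As a rough illustration of the HueWindow idea from the tips above, here is a sketch that keeps a pixel’s color only if its hue falls inside a chosen window and renders everything else as grey. The window width, the grey fallback, and the function name are all assumptions on my part; the real filter may well behave differently.

```python
import colorsys


def hue_window(rgb, center_deg, width_deg=40.0):
    """Keep pixels whose hue is within the window; turn the rest grey."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    # Shortest angular distance between the pixel's hue and the window centre.
    diff = abs(h * 360.0 - center_deg) % 360.0
    diff = min(diff, 360.0 - diff)
    if s > 0 and diff <= width_deg / 2:
        return tuple(rgb)
    # Outside the window: replace the colour with its luma as a grey level.
    grey = round(0.299 * rgb[0] + 0.587 * rgb[1] + 0.114 * rgb[2])
    return (grey, grey, grey)


print(hue_window((0, 255, 0), center_deg=120))   # green survives a green window
print(hue_window((255, 0, 0), center_deg=120))   # red is greyed out
```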

In terms of use cases — matching clothes, correctly parsing status lights on gadgets, and managing parking structures are all possibilities. In the long run, helping pilots and truckers and even SCADA engineers might be nice. There’s a lot of systems with warning lights, and they aren’t always obvious to the color blind.

Really though, not being color blind I really can’t imagine how this technology will be used. I’m pretty sure it won’t be used to break the Internet though, and for once, that’s fine by me.

Ultimately, why do I think DanKam is working? The basic theory runs as follows:

- The visual system is trying to assign one of a small number of hues to every surface

- Color blindness, as a shift of the green receptor towards red, is confusing this assignment

- It is possible to emit a “cleaner signal”, such that even colorblind viewers can see colors, and the differences between colors, accurately.

- It has nothing to do with DNS (I kid! I kid! But no really. Nothing.)

If there’s interest, I’ll write up another post containing some unexpected evidence for the above theory.

SIGGRAPH 2010

Well, I didn’t get to make it to SIGGRAPH this year — but, as always, Ke-Sen Huang’s SIGGRAPH Paper Archive has us covered. Here’s some papers that caught my eye:

The Frankencamera: An Experimental Platform for Computational Photography

Is there any realm of technology that moves faster than digital imaging? Consumer cameras are on a six month product cycle, and the level of imaging we’re getting out of professional gear nowadays absolutely (and finally) blows away what we were able to do with film. And yet, there is so much more possible. Much of the intelligence inside consumer and professional cameras is locked away. While there are some efforts at exposing scriptability (see CHDK for Canon consumer/prosumer cams), the really low level stuff remains just out of reach.

That’s changing. A group out of Stanford is creating the Frankencamera — a completely hackable, to the microsecond scale, base for what’s being referred to as Computational Photography. Put simply, CCDs are not film. They don’t require a shutter to cycle, their pixels do not need to be read simultaneously, and since images are actually fundamentally quite redundant (thus their compressibility), a lot can be done by merging the output of several frames and running extensive computations on them, etc. Some of the better things out of the computational photography realm:

- Flutter Shutter Photography: If you ever wanted “Enhance” a la CSI, this is how we’re going to get it.

- Light Field Photography With A Handheld Camera: What if you didn’t need to focus? What if you could capture all depths at once?

Getting these mechanisms out of the lab, and into your hands, is going to require a better platform. Well, it’s coming 🙂

Parametric Reshaping of Human Bodies in Images

Electrical Engineering has Alternating Current (AC) and Direct Current (DC). Computer Security has Code Review (human-driven analysis of software, seeking faults) and Fuzzing (machine-driven randomized bashing of software, also seeking faults). And Computer Graphics has Polygons (shapes with textures and lighting) and Images (flat collections of pixels, collected from real world example). To various degrees of fidelity, anything can be built with either approach, but the best things often happen at the intersections of each tech tree.

In graphics, a remarkable amount of interesting work is happening when real world footage of humans is merged with an underlying polygonal awareness of what’s happening in three dimensional space. Specifically, we’re getting the ability to accurately and trivially alter images of human bodies. In the video above, and in MovieReshape: Tracking and Reshaping of Humans in Videos from SIGGRAPH Asia 2010, complex body characteristics such as height and musculature are being programmatically altered to such a level of fidelity that the human visual system sees everything as normal.

That is actually somewhat surprising. After all, if there’s anything we’re supposed to be able to see small changes in, it’s people. I guess in the era of Benjamin Button (or, heck, normal people photoshopping their social networking photos) nothing should be surprising, but a slider for “taller” wasn’t expected.

Unstructured Video-Based Rendering: Interactive Exploration of Casually Captured Videos

In The Beginning, we thought it was all about capturing the data. So we did so, to the tune of petabytes.

Then we realized it was actually about exploring the data — condensing millions of data points down to something actionable.

Am I talking about what you’re thinking about? Yes. Because this precise pattern has shown up everywhere, as search, security, business, and science itself find themselves absolutely flooded with data, but with not quite as much knowledge of what to do with all that data. We’re doing OK, but there’s room for improvement, especially for some of the richer data types — like video.

In this paper, the authors extend the sort of image correlation research we’ve seen in Photosynth to video streams, allowing multiple streams to be cross-referenced in time and space. It’s well known that, after the Oklahoma City bombing, federal agents combed through thousands of CCTV tapes, using the explosion as a sync pulse and tracking the one van that had the attackers. One wonders the degree to which that will become automatable, in the presence of this sort of code.

Note: There’s actually a demo, and it’s 4GB of data!

Second note: Another fascinating piece of image correlation, relevant to both unstructured imagery and the unification of imagery and polygons, is here: Building Rome On A Cloudless Day.

In terms of “user interfaces that would make my life easier”, I must say, something that turns seeking from the “sequence of tiny freeze frames” (at best) or “just a flat bar I can click on” (at worst) into “a jigsaw puzzle of useful images” would be mighty nice.

(See also: Video Tapestries)

You know, some people ask me why I pay attention to pretty pictures, when I should be out trying to save the world or something.

Well, let’s be honest. A given piece of code may or may not be secure. But the world’s most optimized algorithm for turning Anime into ASCII is without question totally awesome.

SIGGRAPH 2008: The Quest for More Pixels

So, last week, I had the pleasure of being stabbed, scanned, physically simulated, and synthetically defocused. Clearly, I must have been at SIGGRAPH 2008, the world’s biggest computer graphics conference. While it usually conflicts with Black Hat, this year I actually got to stop by, though a bit of a cold kept me from enjoying as much of it as I’d have liked. Still, I did get to walk the exhibition floor, and the papers (and videos) are all online, so I do get to write this (blissfully DNS and security unrelated) report.

SIGGRAPH brings in tech demos from around the world every year, and this year was no exception. Various forms of haptic simulation (remember force feedback?) were on display. Thus far, the best haptic simulation I’d experienced was a robot arm that could “feel” like it was actually 3 pounds or 30 pounds. This year had a couple of really awesome entrants. By far the best was Butterfly Haptics’ Maglev system, which somehow managed to create a small vertical “puck” inside a bowl that would react, instantaneously, to arbitrary magnetic forces and barriers. They actually had two of these puck-bowls side by side, hooked up to an OpenGL physics simulation. The two pucks, in your hand, became rigid platforms in something of a polygon playground. Anything you bumped into, you could feel, anything you lifted, would have weight. Believe it or not, it actually worked, far better than it had any right to. Most impressively, if you pushed your in-world platforms against each other, you directly felt the force from each hand on the other, as if there was a real-world rod connecting the two. Lighten up a bit on the right hand, and the left wouldn’t get pushed quite so hard. Everything else was impressive, but this was the first haptic simulation I’ve ever seen that tricked my senses into perceiving a physical relationship in the real world. Cool!

Also fun: This hack with ultrasonic transmitters by Takayuki Iwamoto et al, which was actually able to create free-standing regions of turbulence in air via ultrasonic interference. It really just feels like a bit of vibrating wind (just?), but it’s one step closer to that holy grail of display technology, Princess Leia.

Best cheap trick award goes to the Superimposing Dynamic Range (YouTube) guys. There’s just an absurd amount of work going into High Dynamic Range image capture and display, which can handle the full range of light intensities the human eye is able to process. People have also been having lots of fun projecting images, using a camera to see what was projected, and then altering the projection based on that. These guys went ahead and, instead of mixing a projector with a camera, they mixed it with a printer. Paper is very reflective, but printer toner is very much not, so they created a shared display out of a laser printout and its actively displayed image. I saw the effects on an X-Ray — pretty convincing, I have to say. Don’t expect animation anytime soon though 🙂 (Side note: I did ask them about e-paper. They tried it — said it was OK, but not that much contrast.)

Always cool: Seeing your favorite talks productized. One of my favorite talks in previous years was out of Stanford — Synthetic Aperture Confocal Imaging. Unifying the output of dozens of cheap little Quickcams, these guys actually pulled together everything from Matrix-style bullet time to the ability to refocus images — to the point of being able to see “around” occluding objects. So of course Point Grey Research, makers of all sorts of awesome camera equipment, had to put together a 5×5 array of cameras and hook ’em up over PCI express. Oh, and implement the Synthetic Aperture refocusing code, in realtime, demo’d at their booth, controlled with a Wii controller. Completely awesome.

Of course, some of the coolest stuff at SIGGRAPH is reserved for full conference attendees, in the papers section. One nice thing they do at SIGGRAPH however is ask everyone to create five minute videos of their research. This makes a lot of sense when what everyone’s researching is, almost by definition, visually compelling. So, every year, I make my way to Ke-Sen Huang’s collection of SIGGRAPH papers and take a look at the latest coming out of SIGGRAPH. Now, I have my own biases: I’ve never been much of a 3D modeler, but I started out doing a decent amount of work in Photoshop. So I’ve got a real thing for image based rendering, or graphics technologies that process pixels rather than triangles. Luckily, SIGGRAPH had a lot for me this year.

First off, the approach from Photosynth continues to yield Awesome. Dubbed “Photo Tourism” by Noah Snavely et al, this is the concept that we can take individual images from many, many different cameras, unify them into a single three dimensional space, and allow seamless exploration. After having far too much fun with a simple search for “Notre Dame” in Flickr last year, this year they add full support for panning and rotating around an object of interest. Beautiful work — I can’t wait to see this UI applied to the various street-level photo datasets captured via spherical cameras.

Speaking of cameras, now that the high end of photography is almost universally digital, people are starting to do some really strange things to camera equipment. Chia-Kai Liang et al’s Programmable Aperture Photography allows for complex apertures to be synthesized above and beyond just an open and shut circle, and Ramesh Raskar et al’s Glare Aware Photography evaded the megapixel race by filtering light by incident angle — a useful thing to do if you’re looking to filter glare that’s coming from inside your lens.

Another approach is also doing well: Shai Avidan and Ariel Shamir’s work on Seam Carving. Most people probably don’t remember, but when movies first started getting converted for home use, there was a fairly huge debate over what to do about the fact that movies are much wider (85% wider) than they are tall. None of the three solutions — Letterboxing (black bars on the top and bottom, to make everything fit), Pan and Scan (picking the “most interesting” square of video from the rectangular frame), or “Anamorphic” (just stretch everything) — made everyone happy, but Letterboxing eventually won. I wonder what would have happened if this approach was around. Basically, Avidan and Shamir find the “least energetic” line of pixels to either add or remove. Last year, they did this to photos. This year, they come out with Improved Seam Carving for Video Retargeting. The results are spookily awesome.
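To give a flavor of what finding the “least energetic” line of pixels means, here is a sketch of a common dynamic-programming formulation for a single vertical seam in a still image. It assumes you already have a per-pixel energy grid (for example, some gradient magnitude); the function names are mine, and I am not claiming this is how the video retargeting paper does it.

```python
def find_vertical_seam(energy):
    """Return one column index per row tracing the lowest-energy vertical seam."""
    height, width = len(energy), len(energy[0])
    # cost[y][x] = cheapest total energy of any seam ending at (x, y).
    cost = [list(energy[0])]
    for y in range(1, height):
        prev = cost[-1]
        row = []
        for x in range(width):
            lo, hi = max(0, x - 1), min(width, x + 2)
            row.append(energy[y][x] + min(prev[lo:hi]))
        cost.append(row)
    # Backtrack from the cheapest cell in the bottom row.
    x = min(range(width), key=cost[-1].__getitem__)
    seam = [x]
    for y in range(height - 1, 0, -1):
        lo, hi = max(0, x - 1), min(width, x + 2)
        x = min(range(lo, hi), key=cost[y - 1].__getitem__)
        seam.append(x)
    seam.reverse()
    return seam


def remove_seam(image, seam):
    """Drop one pixel per row, making the image one column narrower."""
    return [row[:x] + row[x + 1:] for row, x in zip(image, seam)]


energy = [
    [9, 1, 9, 9],
    [9, 9, 1, 9],
    [9, 9, 9, 1],
]
seam = find_vertical_seam(energy)
print(seam, remove_seam(energy, seam))
```

Each step of the seam may move at most one column left or right, which is what keeps the removed line connected and the result free of obvious tears.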

Speaking of spooky: Data-Driven Enhancement of Facial Attractiveness. Sure, everything you see is photoshopped, but it’s pretty astonishing to see this automated. I wonder if this is going to follow the same path as Seam Carving, i.e. photo today, video tomorrow.

Indeed, there’s something of a theme going on here, with video becoming inexorably easier and easier to manipulate in a photorealistic manner. One of my favorite new tricks out of SIGGRAPH this year goes by the name of Unwrap Mosaics. The work of Microsoft’s Pushmeet Kohli, this is nothing less than the beginning of Photoshop’s applicability to video — and not just simple scenes, but real, dynamic, even three dimensional motion. Stunning work here.

It’s not all about pixels though. A really fun paper called Automated Generation of Interactive 3D Exploded View Diagrams showed up this year, and it’s all about allowing complex models of real world objects to be comprehended in their full context. It’s almost more UI than graphics — but whatever it is, it’s quite cool. I especially liked the moment they’re like — heh, let’s see if this works on a medical model! Yup, works there too.

As mentioned earlier, the SIGGRAPH floor was full of various devices that could assemble a 3D model (or at least a point cloud) of any small object they might get pointed at. (For the record, my left hand looks great in silver triangles.) Invariably, these devices work like a sort of hyperactive barcode scanner, monitoring how long it takes for the red beam to return to a photodiode. But here’s an interesting question: How do you scan something that’s semi-transparent? Suddenly you can’t really trust all those reflections, can you? Clearly, the answer is to submerge your object in fluorescent liquid and scan it with a laser tuned to a frequency that’ll make its surroundings glow. Clearly. Fluorescent Immersion Range Scanning, by Matthias Hullin and crew from UBC, is quite a stunt.

So you might have heard that video cards can do more than just push pretty pictures. Now that Moore’s Law is dead (how long have we been stuck with 2GHz processors?), improvements in computational performance have had to come from fundamentally redesigning how we process data. GPUs have been one of a couple of players (along with massive multicore x86 and FPGAs) in this redesign. Achieving greater than 50x speed improvements over traditional CPUs on non-graphics tasks like, say, cracking MD5 passwords, they’re doing OK in this particular race. Right now, the great limiter remains the difficulty of programming the GPUs — and, every month, something new comes to make this easier. This year, we get Qiming Hou et al’s BSGP: Bulk-Synchronous GPU Programming. Note the pride they have with their X3D parser — it’s not just about trivial algorithms anymore. (Of course, now I wonder when hacking GPU parsers will be a Black Hat talk. Short answer: Probably not very long.)

Finally, for sheer brainmelt, Towards Passive 6D Reflectance Field Displays by Martin Fuchs et al is just weird. They’ve made a display that’s view dependent — OK, well, lenticular displays will show you different things from different angles. Yeah, but this display is also illumination dependent — meaning, it shows you different things based on lighting. There’s no electronics in this material, but it’ll always show you the right image with the right lighting to match the environment. Weird.

All in all, a wonderfully inspiring SIGGRAPH. After being so immersed in breaking things, it’s always fun to play with awesome things being built.

Best Thing Ever

Fake Dan Kaminsky is the best thing evar. Mad bonus points for the Root Server Gas Pump. I can’t even wrap my mind around how many shots I owe my crew right about now.

I Like Big Graphs And I Cannot Lie

You other hackers can’t deny…when a packet routes in with an itty bitty length and a huge string in your face you get sick…cuz you’ve fuzzed that trick…

h0h0h0! I bring Christmas presents! Lots of new Sony data is about to drop, but for now please enjoy Xovi, my streaming graph visualization framework. Based on the excellent Boost Graph Library, and written with the generous assistance of Boost’s Doug Gregor, Xovi is my attempt to make complex networks at least partially comprehensible. There’s lots of work left to do — but, oooh. Pretty.

Not-MD5 Imagery

Wow, I need a better CMS. Thinking of Drupal, though apparently Mambo is fairly cool as well. I’m open to suggestions, and I might be willing to throw Pizza/Tequila/Defcon passes out at someone who gets this monkey off my back.

Actually, the big risk of having good content management is that you’ll find yourself coding less and whinging about other people’s code more. So somehow I’ll need to enforce some sort of ratio system…something like a minimum LoC/LoW ratio. Maybe I can even synthesize a graphical representation of said ratio…

Anyway, lots of people have been asking for pictures I’ve taken of them. So I’ll go ahead and push a few links out, thus satisfying my site admin who’s horrified that I haven’t been maintaining my Gooooooooooooooogle Rank…

- RSA 2005

- Codecon 2005 (GNU Radio!)

- Shmoocon 2005

- Rest of my pics

MD5 Imagery

Just because we don’t have access to Wang’s attack on MD5 doesn’t mean we can’t seek out new and amusing ways to reverse engineer it…some interesting pictures, as Wang’s payloads propagate through an MD5 hash in a bit-visualized environment:

- Wang’s vec1 (PNG) (TXT)

- Wang’s vec2 (PNG) (TXT)

- Difference between vec1 and vec2.

- Difference, animated.

The last line is the bit-representation of the final MD5 hash. This is trivially inspired by Greg Rose et al’s musing on MD5 (very fine paper). Some others which have crossed my email:

- Practical Attacks on Digital Signatures Using MD5 Message Digest (A near collision discussing MD5 collisions. Ha!)

- What’s the worst that could happen? Eric Rescorla’s take on crypto vulnerabilities. He’s actually rather surprised how much MD5’s failures don’t break things, purely accidentally even. For instance, using the MD5 attack against certificates isn’t going to work anytime soon.
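If you want to play with the bit-visualization idea yourself, here is a minimal Python sketch of just the last row of those images, the final digest. Python's `hashlib` does not expose MD5's internal round state, so this does not reproduce the propagation pictures, and the inputs in the usage note are placeholders rather than Wang's actual vectors.

```python
import hashlib


def digest_bits(data: bytes) -> str:
    """Return the 128-bit MD5 digest of `data` as a string of '0' and '1'."""
    return "".join(f"{byte:08b}" for byte in hashlib.md5(data).digest())


def bit_diff(a: bytes, b: bytes) -> str:
    """Mark each digest bit that differs between `a` and `b` with '#', else '.'."""
    return "".join(
        "#" if x != y else "."
        for x, y in zip(digest_bits(a), digest_bits(b))
    )
```

Running `print(bit_diff(b"message one", b"message two"))` gives a 128-character row with a `#` wherever the two digests disagree. For a real collision pair, that row would be all dots.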

C’est Graphique 2002

Considering everything I’ve been up to for the last couple of months, you’d think I’d be satisfied. But alas, there was indeed one event I had to skip: SIGGRAPH 2002, in (I believe) San Antonio.

Hmmm? A network/security geek, mourning a missed SIGGRAPH? Not so surprising. I started out in Graphics, before meandering through Web Design, User Interfaces, Emergency Windows Repair, Unix Admin, Security, Low Level Networking…heh, and whatever comes next. But after attending SIGGRAPH 2001, and seeing the Ferrofluid Masterpiece, Protrude, Flow live, I remembered exactly what attracted me to graphics in general and SIGGRAPH in particular.

Nothing like your brain calling bullshit on your eyes to wake you up in the morning.

Anyway, there was some genuinely incredible stuff at SIGGRAPH this year that, surprisingly enough, I never saw much mention of after the show. (As it turns out, my absolute favorite piece of work, the one I myself have become an avid user of, doesn’t even show up on Google!) This is shocking, to the point that I’m actually going to bother to report on it something like four months after the fact just because, well, it’s just that impressive.

The definitive, though incomplete, archive of papers can be found here; I’ve decided to write about a few of the things that surprised/impressed me. Note, I’m heavily biased towards those papers that I could actually download the associated videos of, so very cool sounding things (like raytracing with pixel shaders) couldn’t really be checked out. Oh well.

Click here for the review (it used to be attached directly, but it got a bit too long for the front page).

Behold, The Volumetric Canvas!

Ever since the late 3Dfx revolutionized consumer PC hardware (and by revolutionized, I mean “was completely without peer for over two years”) it’s been clear that specialized ASICs (Application Specific Integrated Circuits) can, in certain instances, utterly wipe the floor with General Purpose processors, even with Moore’s Death March fully in place. I doubt even a Pentium 4 can match 3Dfx’s first product when it comes to the bilinear filtering of even a moderate number of polygons!
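For anyone who hasn't met it, bilinear filtering is just a weighted blend of the four texels around a sample point. Here is a minimal software sketch in Python; the `texture[y][x]` layout and clamp-to-edge behavior are my assumptions, and this says nothing about how 3Dfx's silicon actually implemented it.

```python
import math


def bilinear_sample(texture, u, v):
    """Sample a 2D grid of floats at fractional coordinates (u, v).

    `texture[y][x]` holds the texel values; u runs along x, v along y.
    Coordinates outside the grid are clamped to the edges.
    """
    height, width = len(texture), len(texture[0])
    u = min(max(u, 0.0), width - 1.0)
    v = min(max(v, 0.0), height - 1.0)
    x0, y0 = int(math.floor(u)), int(math.floor(v))
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = u - x0, v - y0
    # Blend horizontally along the top and bottom texel pairs, then vertically.
    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy
```

With `texture = [[0.0, 1.0], [2.0, 3.0]]`, sampling dead center at `(0.5, 0.5)` averages all four texels. That is a handful of multiplies and adds per pixel, repeated for every pixel of every polygon, every frame, which is the kind of workload that rewards dedicated hardware.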

The story continues, though. 3Dfx was supplanted, and eventually purchased outright, by nVidia…and here’s where things get interesting:

Those circuits, ever so specialized, once only barely programmable via register combiners, have grown in power and flexibility. They’re becoming…if not general purpose, no longer fixed function. NV20, embedded in the GeForce 3 and the X-Box, retains the capacity to execute small but powerful pixel and vertex programs against anything streaming out the pipe. The specialized have gone general; what’s old is new again.

And interesting things are coming because of it.

Check this out: At SIGGRAPH 2002, Christof Rezk-Salama released OpenQVIS, his implementation of the techniques in his doctoral thesis: Volume Rendering Techniques for General Purpose Graphics Hardware. What’s this? Check out the following renderings:

Three things are important to realize about those images: First, the hardware used to render them was built to render polygons, not MRI data. Second, if you’ve got an X-Box in your living room, you already own the requisite silicon. Finally, those images render in realtime, somewhere between 10 and 30 FPS. Relative to software performance, that’s the same kind of boost to volumetric rendering as we saw hardware provide to the polygon thrash. Not bad, considering the once fixed-function hardware was never intended to provide this service!

Now, Rezk-Salama isn’t the first to be doing such work. It was, after all, Klaus Engel’s Pre-Integrated Volume Renderer that introduced me to realtime volumetric rendering, not to mention OpenQVIS itself. Klaus’s work is excellent, but it’s OpenQVIS that has me really excited. It’s complete, mature, cross-platform through the Qt toolkit, and Open Source. It’s trivial to generate data for, and it’s fast. So, we’ve got a way to directly render arbitrary 3D matrixes. What will you do with it? Keep me posted 🙂
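To give a feel for what "rendering a 3D matrix" means, here is a deliberately slow software sketch in Python: build a small density volume, then composite it front to back along the z axis. This is a generic CPU illustration of volume compositing under my own assumptions (the sphere data, the opacity constant, the function names); it is not Rezk-Salama's texture-based hardware technique, and it does not read or write OpenQVIS's file format.

```python
def make_sphere_volume(size=16, radius=6.0):
    """Build a size^3 grid of densities: 1.0 inside a centered sphere, else 0.0."""
    c = (size - 1) / 2.0
    return [[[1.0 if (x - c) ** 2 + (y - c) ** 2 + (z - c) ** 2 <= radius ** 2
              else 0.0
              for x in range(size)]
             for y in range(size)]
            for z in range(size)]


def composite_ray(samples, opacity_per_sample=0.2):
    """Front-to-back alpha compositing of density samples along one ray."""
    color, alpha = 0.0, 0.0
    for density in samples:
        a = density * opacity_per_sample
        # Each sample contributes only through what is still transparent.
        color += (1.0 - alpha) * a * density
        alpha += (1.0 - alpha) * a
        if alpha > 0.99:  # early out once the ray is effectively opaque
            break
    return color


def render(volume):
    """Cast one ray per (x, y) pixel straight down the z axis."""
    size = len(volume)
    return [[composite_ray([volume[z][y][x] for z in range(size)])
             for x in range(size)]
            for y in range(size)]
```

`render(make_sphere_volume())` returns a 16x16 grid of brightness values, brightest where the rays pass through the most sphere. Every pixel repeats the same little loop over its own column of voxels, and that per-pixel regularity is what makes the job such a good match for graphics hardware.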

Local Mirror

OpenQVIS (Source Code, GPL License)

OpenQVIS (Win32 Binary, Probably Requires DX8 Pixel Shaders)

Bonsai Tree

CT Scan of a Head (Large)

CT Scan of a Head (Small)

Engine

Inner Ear

MRI of a Head

Teddy Bear!

Temporal Bone

“Volume Rendering Techniques for General Purpose Graphics Hardware”, Christof Rezk-Salama